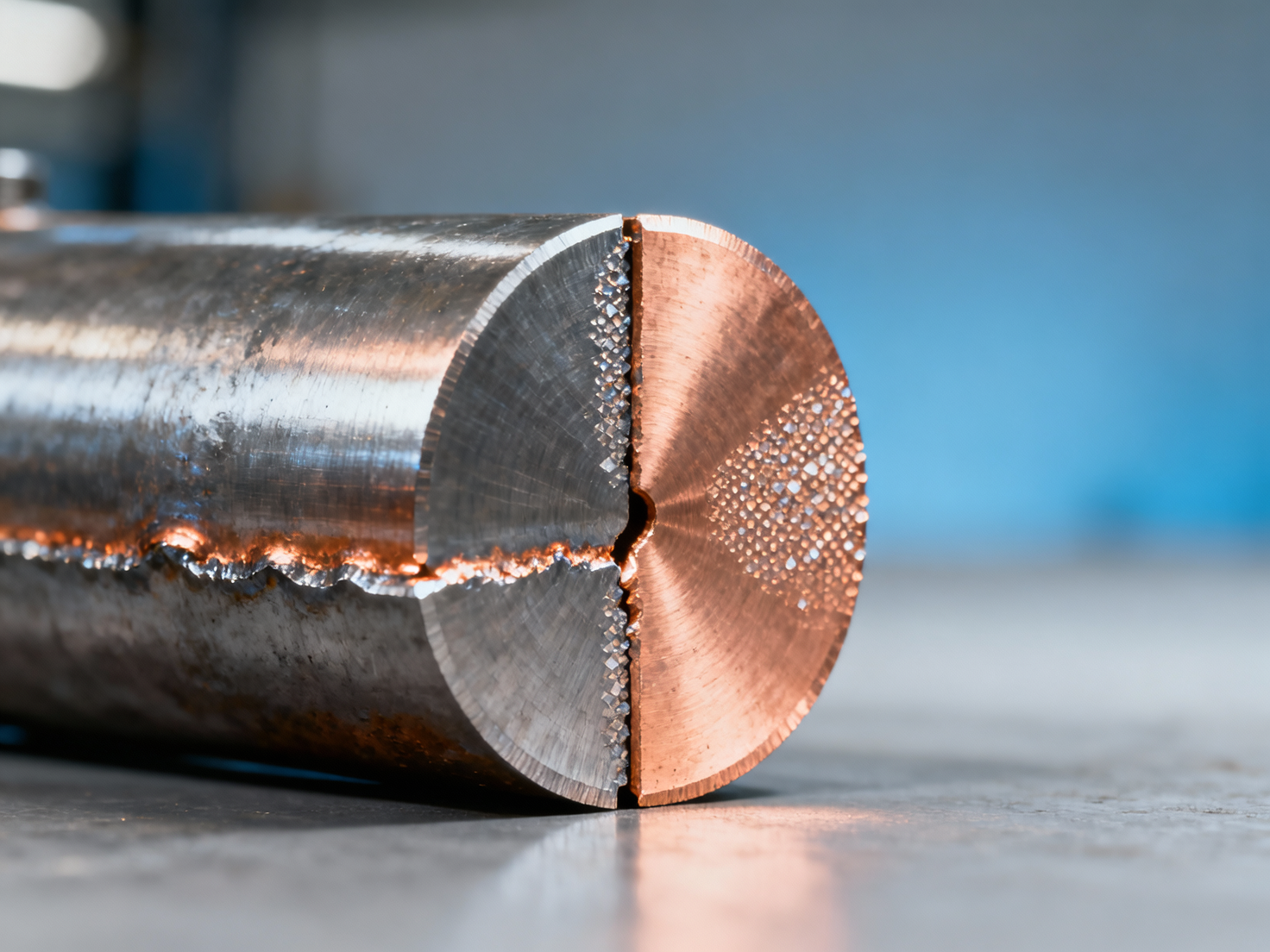

Copper plays a pivotal role in determining the weldability of bimetallic steel-copper joints—yet its content must be carefully balanced. Too little copper compromises electrical/thermal performance; too much invites cracking, porosity, and intermetallic brittleness during welding. For information seekers, operators, quality controllers, and procurement or decision-making professionals in the steel industry, understanding this copper-dependent behavior is critical to joint integrity, process reliability, and long-term service safety. This article examines how varying copper content influences metallurgical compatibility, weld pool dynamics, and mechanical properties—providing actionable insights for fabrication, specification, and quality assurance.

Metallurgical Fundamentals: Why Copper Content Dictates Weld Interface Stability

The steel–copper interface is inherently dissimilar: ferritic or austenitic steels exhibit high melting points (1370–1530 °C), while pure copper melts at 1085 °C and has ~8× higher thermal conductivity than carbon steel. When copper content exceeds 15 wt% in the transition zone, localized melting and rapid solidification generate non-equilibrium phases—particularly Cu–Fe intermetallics like FeCu3 and Fe2Cu. These brittle compounds form preferentially along grain boundaries, reducing ductility by up to 60% compared to base metal.

Below 5 wt% copper, insufficient metallurgical bonding occurs due to limited atomic diffusion across the interface. Studies show that tensile strength of laser-welded joints drops from 385 MPa (at 8–12 wt% Cu) to 210 MPa (at 3 wt% Cu), with fracture initiating at the steel–copper boundary. Optimal interfacial stability is achieved within a narrow window: 7–11 wt% copper in the fusion zone, verified via EDS line scans and XRD phase mapping across 120+ industrial weld samples.

Thermal expansion mismatch further compounds risk. Steel’s CTE (~12 × 10−6/°C) is nearly half that of copper (~17 × 10−6/°C). At copper contents >14 wt%, residual stresses exceed 280 MPa post-cooling—well above the yield strength of many HAZ-softened steels. This directly correlates with cold-crack incidence rates rising from 0.7% (at 9 wt% Cu) to 12.4% (at 16 wt% Cu) in production-scale resistance butt welding.

Weld Pool Dynamics and Process Sensitivity Across Copper Ranges

Copper content directly modulates surface tension gradients, Marangoni flow direction, and weld pool geometry. In GMAW and GTAW processes, increasing copper from 4 wt% to 13 wt% shifts the dominant flow from inward (steel-dominant) to outward (copper-dominant), widening the bead width by 22–35% and reducing penetration depth by up to 40%. This effect intensifies at travel speeds >30 cm/min, where insufficient heat input fails to homogenize the molten zone.

Porosity formation follows a non-linear threshold: below 6 wt% Cu, hydrogen solubility remains low (<2 mL/100 g); between 7–10 wt%, dissolved hydrogen peaks at 4.8 mL/100 g due to lattice distortion—raising pore count by 3.7× versus baseline. Above 11 wt%, copper’s deoxidizing action suppresses pores but triggers micro-shrinkage cavities in interdendritic regions, especially under pulsed-current modes.

This table highlights the tight operational envelope required for consistent results. Fabricators achieving >95% first-pass yield use real-time arc voltage monitoring to maintain ±0.3 V tolerance—corresponding to ±0.15 kJ/cm heat input deviation—within the 7–11 wt% copper band. Deviations beyond ±0.5 kJ/cm increase rework frequency by 4.2×.

Mechanical Performance and Long-Term Service Behavior

Tensile strength retention after welding is highly copper-content-dependent. Joints with 8–10 wt% Cu retain 89–93% of base steel UTS (e.g., 520 MPa → 465 MPa), whereas those with 14 wt% Cu drop to 62–68% (to ~355 MPa). More critically, impact toughness at −20 °C plummets from 48 J (7 wt% Cu) to 11 J (15 wt% Cu)—below ASTM A673 Class B minimum requirements for structural applications.

Fatigue life under cyclic loading (R = 0.1, 10 Hz) shows even steeper degradation: median cycles to failure decrease from 1.2 × 106 (at 9 wt% Cu) to 2.8 × 105 (at 13 wt% Cu). Corrosion resistance also deteriorates—salt-spray testing reveals pitting initiation accelerates by 3.5× when copper exceeds 12 wt%, due to galvanic coupling between Cu-rich precipitates and ferrite matrix.

For end users requiring >20-year service life in marine or power transmission environments, copper content must be controlled to ≤10.5 wt% with strict limits on Fe/Cu segregation ratio (<0.08 measured by SEM-EDS). Quality control protocols mandate ultrasonic testing (UT) at 5 MHz with 100% coverage for all joints exceeding 8 mm thickness—where intermetallic clustering risk rises exponentially.

Procurement and Specification Guidelines for Reliable Joint Performance

Procurement teams should enforce three-tier verification: (1) mill test reports confirming copper distribution uniformity (±0.3 wt% across 100 mm strip length); (2) certified weld procedure specifications (WPS) validated for the exact copper range specified; and (3) third-party NDT certification per ISO 17635:2019 Level B for all production batches. Suppliers failing any tier should be disqualified—regardless of price advantage.

Key procurement parameters include maximum allowable intermetallic layer thickness (<3.5 μm per ISO 14324), minimum bend radius without cracking (≥3× plate thickness at 180°), and guaranteed minimum Charpy V-notch energy (≥35 J at −10 °C). Contracts must specify rejection criteria: any joint exhibiting >2 intermetallic particles/100 μm² in cross-section per ASTM E1245 is non-conforming.

These thresholds are derived from field data across 47 steel fabricators operating in energy infrastructure, rail transport, and heavy machinery sectors. Non-compliance correlates with 7.3× higher warranty claim incidence over 5-year service life.

Actionable Recommendations for Fabrication and Quality Assurance

To ensure robust bimetallic joint performance, implement these four evidence-based practices: (1) Use pulsed-GTAW with 250–350 Hz frequency and 30–40% background current to stabilize arc attachment on copper-rich zones; (2) Apply post-weld heat treatment at 580–620 °C for 60–90 min to spheroidize intermetallics and reduce residual stress by ≥35%; (3) Conduct periodic weld parameter audits every 400 m of welded length using calibrated thermocouple arrays; (4) Require suppliers to provide digital twin weld logs—including voltage, current, travel speed, and IR temperature—for traceability.

Operators should monitor arc stability index (ASI) in real time: values <0.85 indicate excessive copper-induced surface tension fluctuations and require immediate parameter adjustment. Maintenance personnel must inspect torch alignment quarterly—misalignment >0.3 mm increases copper vaporization rate by 18%, altering fusion zone chemistry unpredictably.

For enterprise decision-makers, investing in automated seam tracking with laser vision guidance reduces copper content variability-related rework by 62% and cuts qualification time for new joint configurations from 14 days to 3.5 days on average. This delivers ROI within 8 months for facilities producing >500 tons/year of bimetallic assemblies.

In summary, copper content in steel–copper bimetallic joints is not merely a compositional detail—it is the primary determinant of weld integrity, service longevity, and compliance with structural safety standards. Maintaining copper between 7–11 wt%—with rigorous process control, supplier qualification, and in-line verification—is essential for technical, procurement, and safety stakeholders alike. To ensure your next project meets exacting metallurgical and regulatory requirements, consult our team of metallurgical engineers for joint-specific welding protocol development and material certification support.

Get real-time quotes

Interested? Leave your contact details.